The Spreadsheet Problem

There’s a moment in every AI implementation where the demo becomes operational.

The model works. The predictions are good. Then someone asks: “How do we actually use this in production?”

And the answer, more often than anyone wants to admit, is: “We’ll put it in a spreadsheet.”

This is the spreadsheet problem. AI predictions get routed into spreadsheets: brittle, informal tools never designed for continuous, machine-speed execution.

This works for a while. Then it doesn’t.

And when it breaks, it doesn’t break because the AI failed. It breaks because the infrastructure holding it together never evolved beyond Excel and was never built for what’s being asked of it.

The pattern that repeats

Every time computing crosses from advisory to operational, an execution substrate emerges to manage the transition.

Accounting moved from ledgers to operations. Double-entry bookkeeping became mandatory once financial decisions drove resource allocation.

Software development moved from personal craft to coordinated engineering. Version control emerged because deploying code without provenance became organizationally untenable.

Cloud infrastructure moved from provisioned servers to elastic systems. Orchestration platforms emerged as the only way to manage complexity at scale.

Payments moved from integrated systems to distributed APIs. Rails like Stripe formalized the layer because rebuilding compliance, versioning, and audit trails was non-differentiating work with catastrophic downside.

Data moved from application byproduct to strategic asset. Warehouses and pipelines became infrastructure because governed, reusable analytics required a separate execution substrate.

AI is crossing that boundary now.

Prediction is becoming execution.

What’s actually changing

For decades, software digitized information. Systems stored what happened: transactions, events, states, records.

Now AI is digitizing decisions.

Systems of record captured what happened. Systems of decision determine what happens next.

Information needs storage, retrieval, and consistency.

Decisions need reproducibility, versioning, auditability, and governance.

The next system of record won’t be data. It will be logic.

And logic requires something data never did: accountable computation.

When prediction meets execution

AI deployments split into two phases:

Phase 1: Advisory. The model predicts. Humans decide. The output is analysis.

Phase 2: Operational. The model predicts. Systems execute. The output drives business logic.

Phase 1 is where most AI lives today. Phase 2 is where the value actually concentrates.

The problem is that moving from Phase 1 to Phase 2 requires infrastructure that doesn’t exist yet.

A demand forecast from an AI model is useful.

A demand forecast that automatically adjusts pricing, triggers procurement orders, reallocates inventory across warehouses, and updates financial projections is operational.

That transition doesn’t happen in the model. It happens in the layer that translates prediction into executed logic.

Right now, that layer is improvised.
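As a rough illustration of what that layer does, here is a minimal Python sketch. The model’s forecast is probabilistic, but the logic that turns it into actions can be deterministic: the same forecast always produces the same procurement and pricing actions. The names (`Forecast`, `Action`, `translate`), the 50-unit safety stock, and the 5% price nudge are hypothetical choices for illustration, not a description of any real system.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Forecast:
    sku: str
    predicted_units: int  # model output: expected demand for the next period
    on_hand_units: int    # current inventory for this SKU


@dataclass(frozen=True)
class Action:
    kind: str      # "procure" or "reprice"
    sku: str
    amount: float  # units to order, or a price multiplier


def translate(forecast: Forecast, safety_stock: int = 50) -> list[Action]:
    """Deterministically turn one demand forecast into executable actions.

    Hypothetical rules for illustration: order enough to cover forecast demand
    plus a safety buffer, and raise price 5% when demand exceeds twice the stock.
    """
    actions: list[Action] = []
    shortfall = forecast.predicted_units + safety_stock - forecast.on_hand_units
    if shortfall > 0:
        actions.append(Action("procure", forecast.sku, float(shortfall)))
    if forecast.predicted_units > 2 * forecast.on_hand_units:
        actions.append(Action("reprice", forecast.sku, 1.05))
    return actions
```

For example, `translate(Forecast("SKU-1", 300, 120))` yields a procurement action for 230 units (300 forecast plus 50 safety stock, minus 120 on hand) and a 1.05 repricing action, because forecast demand is more than twice the stock on hand. In a spreadsheet, that same logic would typically live in a few cells with no record of which version produced which order.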

The logic-container problem

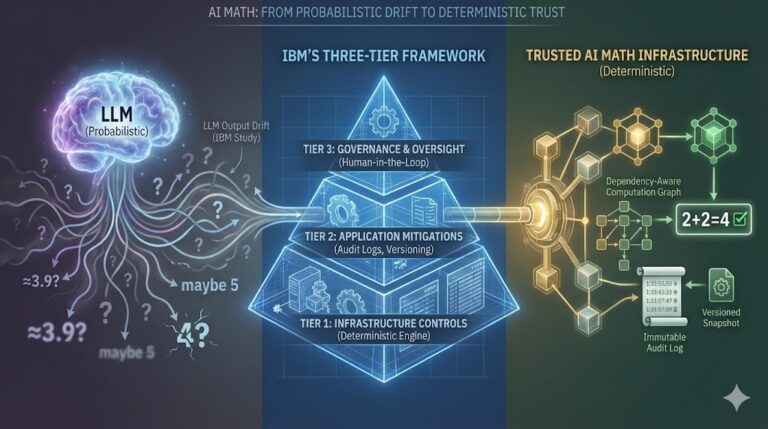

Organizations currently store computational logic in three places:

Spreadsheets store logic informally. Flexible, accessible, impossible to version or govern at scale.

Code stores logic rigidly. Precise, but fragmented across applications. Changes require deployment. Reproducibility requires infrastructure most teams don’t maintain.

Models infer logic probabilistically. Powerful, but non-deterministic. You can’t audit what you can’t reproduce.

What’s missing is a way to store logic as governed computation: deterministic, versioned, auditable, reusable across systems.

That’s not a tooling gap. It’s a layer gap.
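One way to picture “governed computation” is a minimal sketch, assuming logic is stored as declarative rule parameters rather than spreadsheet cells. Each rule version is content-addressed (a truncated SHA-256 hash of its canonical JSON), every execution is logged with the version and inputs that produced it, and any logged decision can be replayed to confirm it reproduces. `LogicStore`, `compute_price`, `version_of`, and the rule fields are hypothetical names for illustration.

```python
from __future__ import annotations

import hashlib
import json
from typing import Any


def version_of(rule: dict[str, Any]) -> str:
    """Content-address a rule: identical parameters always yield the same id."""
    canonical = json.dumps(rule, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def compute_price(rule: dict[str, Any], cost: float, demand_ratio: float) -> float:
    """Pure, deterministic pricing logic driven entirely by the rule's parameters."""
    price = cost * (1 + rule["markup"])
    if demand_ratio >= rule["surge_threshold"]:
        price *= rule["surge_multiplier"]
    return round(price, 2)


class LogicStore:
    """Hypothetical store that keeps logic versioned, executed, and auditable."""

    def __init__(self) -> None:
        self.rules: dict[str, dict[str, Any]] = {}
        self.audit_log: list[dict[str, Any]] = []

    def publish(self, rule: dict[str, Any]) -> str:
        version = version_of(rule)
        # Store a copy so later edits to the caller's dict can't alter the published version.
        self.rules[version] = dict(rule)
        return version

    def execute(self, version: str, cost: float, demand_ratio: float) -> float:
        output = compute_price(self.rules[version], cost, demand_ratio)
        # Record which logic ran, on which inputs, and what it produced.
        self.audit_log.append({
            "version": version,
            "inputs": {"cost": cost, "demand_ratio": demand_ratio},
            "output": output,
        })
        return output

    def replay(self, entry: dict[str, Any]) -> bool:
        """Re-run a logged decision and check that it reproduces the recorded output."""
        rule = self.rules[entry["version"]]
        return compute_price(rule, **entry["inputs"]) == entry["output"]


store = LogicStore()
v1 = store.publish({"markup": 0.25, "surge_threshold": 2.0, "surge_multiplier": 1.1})
store.execute(v1, cost=40.0, demand_ratio=2.5)  # → 55.0
store.execute(v1, cost=40.0, demand_ratio=1.0)  # → 50.0
```

Because the version id is derived from the rule’s content, publishing identical parameters twice yields the same id, while any change (say, a markup of 0.30 instead of 0.25) yields a new one. Older audit entries keep pointing at the logic that actually ran, which is what makes them replayable.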

Why now, not eventually

Enterprise math has been messy for decades. Spreadsheets have been fragile since VisiCalc. Custom code has always drifted.

So why does a computation layer become inevitable now, instead of the status quo remaining survivable indefinitely?

Because operational math used to run at human speed. Now it runs at machine speed.

At human speed:

- Humans reviewed calculations before execution

- Decisions were periodic (quarterly pricing, annual budgets)

- Errors were local and containable

- Logic changed slowly enough to manage informally

At machine speed:

- Systems execute continuously without review

- AI generates decisions at scale (thousands per second)

- Errors propagate instantly across integrated systems

- Logic updates constantly as models retrain and rules evolve

This compresses the tolerance window to zero.

A pricing error caught in quarterly review is fixable. A pricing error executing 10,000 times per day across five systems is a crisis.
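A back-of-the-envelope count makes the gap concrete. This sketch assumes, purely for illustration, that each execution writes once to each integrated system and that nothing intercepts the error until someone notices it; `erroneous_writes` is a hypothetical helper, not a standard metric.

```python
def erroneous_writes(executions_per_day: int, systems: int, days_undetected: int) -> int:
    """Downstream writes produced by a faulty rule before it is caught.

    Illustrative assumption: every execution writes once to every integrated system.
    """
    return executions_per_day * systems * days_undetected


# One day at the pace described above: 10,000 executions across five systems.
one_day = erroneous_writes(10_000, 5, 1)
# The same error left running until a quarterly review (roughly 90 days).
one_quarter = erroneous_writes(10_000, 5, 90)
```

Under those assumptions, that is 50,000 bad writes after a single day and 4,500,000 by the time a quarterly review would have looked.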

A budget model updated annually can live in a spreadsheet. A dynamic allocation model retraining weekly and triggering procurement automatically cannot.

The shift from human-speed to machine-speed execution is what makes informal computation governance organizationally untenable.

Not eventually. Now.

The question isn’t whether this infrastructure will emerge. It’s what breaks first when it doesn’t.

Reach out: bill.kelly@truemath.ai

Learn more: truemath.ai

Sign up for early access: https://app.truemath.ai/signup